Auditory Simulations

Reethee Madona Antony, Speech-Language-Hearing Sciences

Faculty Advisor: Brett Martin

NML Award: The History and Public Health Award (January 2013)

November 2012

A report on the 1 1/2 year process from imagining a digital solution to developing a research product

When I began working on Auditory Simulations at the New Media Lab in June of 2011, I could not have imagined how challenging it would be to create the tool I needed to answer my research questions. In fact, I did not even realize that building a tool was what I would ultimately do. I had been studying how the brain works in relation to hearing loss and the use of hearing aids. Hearing aid benefits can be evaluated using behavioral and electrophysiological measures. Cortical auditory evoked potentials (CAEPs) are brain responses that are evoked by sound. Evaluation of hearing aid amplification using CAEPs has been limited by the lack of control over the digital signal processing characteristics (especially compression) of the hearing aid. To minimize confounding variables, it is essential to simulate the effects of digital signal processing and to examine them in normal-hearing listeners before examining them in a clinical population (hearing-impaired listeners). Creating this simulation and conducting the follow-up research were the goals of my New Media Lab project, Auditory Simulations.

The Auditory Simulations project had two main objectives: 1) to generate auditory simulations of degraded speech signals; 2) to develop a program that simulated the effects of a hearing aid so that I could conduct my research. However, when I joined the lab I had no idea how to accomplish these objectives. It was a journey of almost three semesters (it still is), and I want to share that journey.

Immediately, I started looking at the possibilities of programming. I soon realized that I would be hindered because I had no background in it, but I was determined to learn C. For the first couple of months, I spent my time at the lab learning the language. It was a steep learning curve. Initially, everything sounded like Greek and Latin, but people at the lab, especially Aaron Knoll, helped me in times of need. He had answers to almost every question I posed. The open source software Code::Blocks was a useful resource for programming in C.

Once I had a grasp of the language, I started looking for books on how to write code for a filter, compressor, equalizer, etc. However, while many books had mathematical expressions, they did not contain the code itself. I realized that the next thing I had to do was learn how to convert these formulas into program code. This was too complex for me without a programming background. I wanted to enroll in programming courses, and I contacted a CUNY professor at another campus. Most of the courses offered at other CUNY colleges were much too advanced, and the professors advised that they might not be the best fit for me.

I tried to approach the issue from a different perspective by using existing software (e.g., Adobe Soundbooth, Sound Forge) and the advanced options it provided to simulate degraded speech signals, and I was able to accomplish my goal. When I showed the result to my mentor, she had just one question for me: “Do you know how these are accomplished? Can you see the code?” I simply had to reply, “No.” This is because in existing commercial software packages one cannot inspect what happens behind the scenes or how a task is accomplished.

It was around this time that a simulation expert from the UK, Dr. Lorenzo Picinali, visited our department. I was more than happy to meet him, and he helped me make my dream a reality. We collaborated using Max 6. It was a gradual process: he would work in the UK and email me the patches he created, and I would tell him what still needed to be done and report any bugs I encountered.

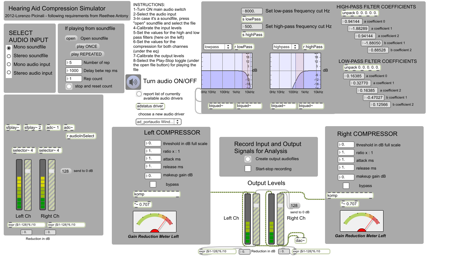

Meanwhile, I went through the tutorials on the Cycling '74 website. The tutorial on the Max 5 guitar processor was especially helpful, as it walked me through developing a patch. Finally, after a series of revisions, a patch was created that met my requirements and whose output could be recorded. The figure shows the current version of the software. The patch will now be used to examine the effects of digital signal processing on CAEPs in listeners with normal hearing and in listeners with hearing loss. I hope to find interesting, publishable results that will pave the way for further investigations.